The Context Problem

Token Economics and the Word That Means Everything

Context, in information science, describes the relational structure that holds meaning in place. AI token economics has turned the concept of context into a billing unit. AI customers, in response, ran with the word context, and started applying context to every AI interaction. Marketing and venture capital companies invented an entire product landscape based upon context, positioning context as every company’s moat, the differentiator.

Meanwhile, foundation AI companies leveraged the context craze, pushing the onus of poor AI performance back on users and blaming context quality for AI implementation failures and AI hallucinations. But context has not been defined in a meaningful way, except to say that the cost of using AI is associated with tokens, tokens are directly attached to context, and context is what everyone needs to be successful with AI systems.

When commercial AI companies architected the token marketplace, context became the talking stick and the measuring stick, a convenient objective tied to outcomes and key results. Yet there is little guidance from foundation AI companies as to what constitutes context. Are we discussing context relative to tokens or context designed for AI reliability? Is it a graph? A markdown file? A YAML format or schema tables? Maybe it’s contextual understanding through a mixed-methods approach?

For lack of clarity and agreed-upon information architecture patterns, AI token economics has continued to soldier onwards, unchecked and rarely questioned. Secondary context marketplaces have emerged to meet market demand for context. Products, services, consultancies and sages have flooded marketplaces with context-oriented solutions, absent any consensus as to the meaning and structure of context. And now that context is enmeshed with tokens, everyone wants context because context accounts for the majority of any organization’s AI spend. It’s a downward spiral, with no end in sight.

The Context Pricing Models

The pricing models of large language model APIs charge by the token. As the Technology Policy Institute explains, a token is the smallest unit of information an AI model processes—a snippet of text ranging from a single character to an entire common word—and all inference is metered against these units.1

IBM’s technical reference defines the context window in explicitly economic terms—the amount of text, in tokens, that a model can consider or “remember” at any one time, describing it as “the equivalent of its working memory.” 2 NVIDIA frames the exchange rate by noting that tokens are the currency of AI, and AI services increasingly structure pricing plans around a model’s rates of token input and output.3 Google’s Gemini API documentation states the transaction directly—billing is determined by the number of input and output tokens, with a token equivalent to roughly four characters of text—about 60 to 80 English words per 100 tokens.4

This is an intentional technical arrangement that has fiscal implications. It is also why context began doing more work than any single word should be asked to carry—functioning simultaneously as a noun (the context window), a verb (to contextualize a prompt), and an adjective (contextual AI). That grammatical sprawl has real financial consequences for those paying for it, and structural consequences for anyone trying to build systems that reliably reason.

Context as a Billing Unit

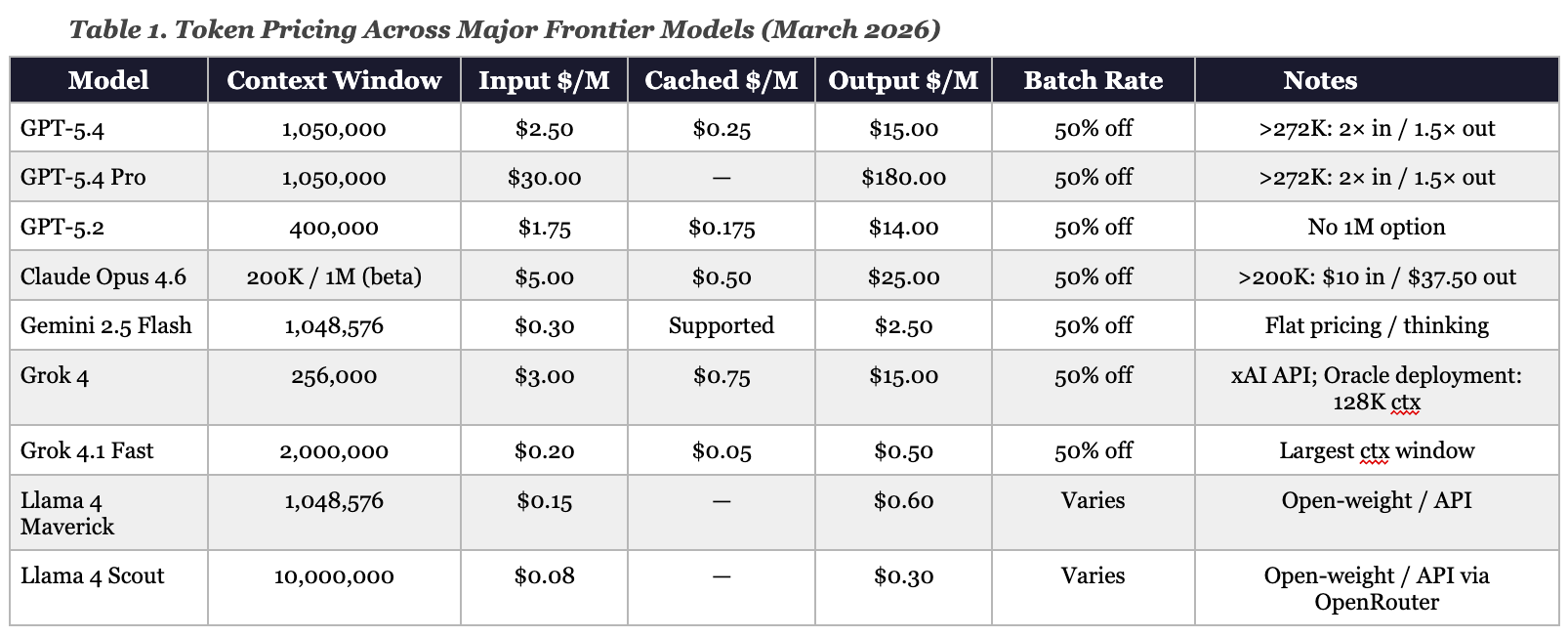

Context window capacity is the primary axis on which frontier AI models are now priced and differentiated. The table below compares current pricing across major models. The spread encodes a market stance—larger context commands higher value, and the most capable reasoning earns a premium rate per token.

Notice how the range across this table is discontinuous. GPT-5.4 Pro output costs $180 per million tokens.5 Gemini 2.5 Flash output costs $2.50 per million tokens.6 That is a 72-fold difference in price for a unit that the market has defined using the word context. Grok 4.1 Fast, xAI’s long-context model, supports a 2-million-token window at $0.20 input and $0.50 output, pricing that undercuts every comparable window size by a substantial margin.7 Llama 4 Maverick, Meta’s open-weight multimodal model, offers a 1-million-token context window at $0.15 input and $0.60 output via API providers, with the model itself available for self-hosting under a community license.8

GPT-5.4’s context window is 1,050,000 tokens, but pricing is not uniform across that range. Prompts exceeding 272,000 input tokens are charged at twice the standard input rate and 1.5 times the standard output rate for the full session—meaning enterprises running long-context workloads pay both the higher per-token price and a session-wide multiplier once that threshold is crossed.9 Azure enforces these economics through quota tiers keyed to tokens per minute and requests per minute, defined per region, per subscription, and per model or deployment type—with enterprise relationships automatically qualifying for higher tiers.10

Claude Opus 4.6 took a different approach. Anthropic introduced the model’s 1-million-token context window in beta while holding pricing flat from the prior Opus generation at $5 input and $25 output per million tokens, positioning expanded context as a capability improvement within existing price tiers rather than a premium add-on. Requests exceeding 200,000 input tokens trigger both a 2× input surcharge and a 1.5× output surcharge—raising effective rates to $10 input and $37.50 output per million tokens for long-context sessions.11
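The surcharge arithmetic above can be sketched in a few lines. This is a minimal illustration and not any vendor’s billing logic: the `TieredPricing` class and its field names are hypothetical, the rates and the 200,000-token threshold are the Claude Opus 4.6 figures quoted in this section, and the sketch assumes the multipliers apply to every token in a request once the threshold is crossed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TieredPricing:
    """Per-million-token rates with a long-context surcharge (illustrative only)."""

    input_rate: float              # USD per million input tokens
    output_rate: float             # USD per million output tokens
    threshold: int                 # input-token count above which the surcharge applies
    input_multiplier: float = 2.0
    output_multiplier: float = 1.5

    def request_cost(self, input_tokens: int, output_tokens: int) -> float:
        """Return the USD cost of a single request.

        Assumption: once the input exceeds the threshold, the multipliers
        apply to all tokens in the request, not only to the excess.
        """
        long_context = input_tokens > self.threshold
        in_rate = self.input_rate * (self.input_multiplier if long_context else 1.0)
        out_rate = self.output_rate * (self.output_multiplier if long_context else 1.0)
        return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


# Rates quoted in the text for Claude Opus 4.6.
opus = TieredPricing(input_rate=5.0, output_rate=25.0, threshold=200_000)

short_cost = opus.request_cost(input_tokens=150_000, output_tokens=4_000)
long_cost = opus.request_cost(input_tokens=250_000, output_tokens=4_000)
print(f"below threshold: ${short_cost:.2f}, above threshold: ${long_cost:.2f}")
```

Under these assumptions, the request with 150,000 input tokens costs about $0.85 and the request with 250,000 input tokens costs about $2.65. Input volume grows by roughly two thirds while the bill more than triples, which is the session-wide effect the threshold produces.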

Llama 4 Scout extends the pricing logic further. Meta’s open-weight model supports an industry-leading 10-million-token context window, with API pricing starting at $0.08 per million input tokens and $0.30 per million output tokens via OpenRouter—among the lowest rates in the frontier model market.12

Grok 4, on xAI’s API, carries a 256,000-token context window priced at $3 input and $15 output per million tokens, while its faster sibling Grok 4.1 Fast offers the 2-million-token window at $0.20 input and $0.50 output, a fraction of that cost.13 Oracle’s documentation for Grok 4 on its cloud infrastructure lists the model at 128,000 tokens of context, half the window available through xAI’s own API, priced on an on-demand basis with cached input tokens available. The same model has two different context ceilings depending on where it is deployed.14

The market has priced capacity—how many tokens the model can see at once. What it has not priced, because there is no mechanism to meter it, is whether the relational structure within those tokens is coherent, navigable, or epistemically sound. The two are not the same, and the vocabulary governing them has collapsed into a single word.

The Context Matrix Has One Word

Context-as-noun is the dominant usage in the industry. Cursor’s definition is probably the most accurate reflection of how the industry uses the word: context is your model’s inputs and outputs, like a long list, where the AI model keeps a working memory for the conversation.15 Anthropic’s engineering team extends this to operational practice. Context is “the set of tokens included when sampling from a large-language model.”

Elaborating on context, Anthropic further defines context engineering as “the set of strategies for curating and maintaining the optimal set of tokens during LLM inference.”16 The Technology Policy Institute notes that context windows, measured in thousands of tokens, are the practical constraint that determines what the model can process in a given exchange—separate from training data, which runs in the trillions, and parameters, which run in the billions.17

Context-as-verb appears in AI vernacular whenever practitioners say “give the model context” or “we need to contextualize this input.” The act of contextualizing, in this usage, means prepending background text to a prompt. OpenAI community discussions around customer support systems illustrate the operational stakes. Practitioners debate the optimal ratio of context tokens to generated tokens, weighing how much background the model needs to produce accurate responses without the context volume crowding out available output space.18

The limitation of context-as-verb is that it treats the act of providing information as equivalent to the act of establishing meaning. A model receiving a large block of pasted documents is not the same as a model operating within a structured knowledge representation. The token count rises identically for both, yet the invoice does not distinguish between them.

Context-as-adjective appears in phrases like contextual AI, context-aware systems, and contextual embeddings—using contextual to signal that a system operates on inputs understood within their relational field. A system that knows what you searched for yesterday may call itself context-aware without understanding why you searched, what that query signals about your goals, or how those goals relate to each other over time. The adjective claims relational intelligence while the underlying noun may only deliver statistical proximity.

Grok’s architecture makes the operational version of this problem concrete. Data Studios documents that Grok’s context window functions as “the principal memory boundary, dictating how much history, detail, and external knowledge can be included at any given moment” and that, unlike systems with automatic state retention, Grok places the responsibility on developers to actively manage and resend conversation history within each request.19 This is context-as-noun determining cost, context-as-verb describing the engineering labor of prepending state, and context-as-adjective promising the persistent awareness that neither fully delivers. So we have three grammatical roles channeled through a single word, with three distinct failure modes.

The engineering response to this instability is fascinating, and points to the strange relationship engineers have with the context problem and context economics. A Hacker News discussion of LLM context compression describes extracting only function signatures, types, and documentation from source code rather than passing full files—reducing a typical function’s token representation from 50 to 8, a 6-fold cost reduction while preserving the structural relationships the model actually needs for architecture reasoning.20
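The compression idea described in that discussion can be sketched with Python’s standard `ast` module. This is an illustration of the general technique and not the tool discussed on Hacker News. The function name `extract_signatures` and the sample source are hypothetical, and the sketch handles only functions, flattening any class or nesting structure.

```python
import ast


def extract_signatures(source: str) -> str:
    """Return a skeleton of `source`: each function signature plus the
    first line of its docstring, with the function body dropped."""
    tree = ast.parse(source)
    lines = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            prefix = "async def" if isinstance(node, ast.AsyncFunctionDef) else "def"
            returns = f" -> {ast.unparse(node.returns)}" if node.returns else ""
            lines.append(f"{prefix} {node.name}({ast.unparse(node.args)}){returns}: ...")
            doc = ast.get_docstring(node)
            if doc:
                lines.append(f'    """{doc.splitlines()[0]}"""')
    return "\n".join(lines)


# Hypothetical source file content used only for demonstration.
sample = '''
def area(width: float, height: float) -> float:
    """Return the rectangle area.

    Both sides must be non-negative.
    """
    if width < 0 or height < 0:
        raise ValueError("sides must be non-negative")
    return width * height
'''

skeleton = extract_signatures(sample)
print(skeleton)
```

The skeleton keeps the name, parameter types, return type, and a one-line description, which is the structural information the discussion argues a model needs for architectural reasoning. How many tokens this saves depends on the tokenizer and the code base, so the sketch makes no claim about a specific ratio.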

The token savings are direct and measurable. But the semantic concept those tokens represent—relational structure versus raw text volume—lacks distinction in AI-centric vocabularies, because one single word is used to describe context requirements and the costs of using AI.

Capacity Is Not Coherence

The research on how models process large context windows complicates the capacity narrative. Chroma’s 2025 study evaluated 18 LLMs—including GPT-4.1, Claude 4, Gemini 2.5, and Qwen3—and documented what researchers call context rot—as input length grows, model performance degrades, even on deliberately simple and controlled tasks.21 The study held task complexity constant, while varying only the number of tokens, isolating input size as the primary variable. Every model tested showed nonuniform performance degradation as tokens accumulated.

The degradation is not smooth or predictable. Lower semantic similarity between a query and its relevant content accelerates performance decline at longer input lengths. A single distractor—text that is topically related but does not answer the question—measurably reduces accuracy compared to a baseline with no distractors, and four distractors compound the decline further.22

Most counterintuitively, shuffled haystacks—context whose sentences were randomly reordered, destroying logical flow—consistently produced better retrieval performance than logically organized context across all 18 models. Models perform worse when the surrounding text has coherent narrative structure than when it is a pile of randomly ordered sentences. The market sells coherence through capacity. The research shows that more tokens can actively degrade coherent performance.

Anthropic’s engineering team explains the architectural mechanism: LLMs use transformer architectures, where every token attends to every other token, producing n² pairwise relationships for n tokens. As context length grows, the model’s ability to capture all pairwise relationships gets “stretched thin, creating a natural tension between context size and attention focus.”23 Models have less training experience with long sequences because shorter sequences dominate training data. The attention budget is finite and depletes with each token added, so more tokens produce more competition for a limited attentional resource instead of more reasoning capacity.
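A quick calculation shows how fast the n² term grows. This is a deliberately simplified sketch: the helper `attention_pairs` is hypothetical, and it counts one full attention map while ignoring causal masking, multiple heads and layers, and any efficient-attention variants a production model may use.

```python
def attention_pairs(n_tokens: int) -> int:
    """Number of token-to-token scores in one full self-attention map."""
    return n_tokens * n_tokens


for n in (1_000, 32_000, 1_000_000):
    print(f"{n:>9,} tokens -> {attention_pairs(n):,} pairwise scores")
```

Doubling the input quadruples the number of pairwise relationships, which is why window size and attention focus pull against each other.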

Meta’s documentation of Llama 4 Scout’s 10-million-token context window comes with a critical qualification found in independent reporting: processing 1.4 million tokens of context requires eight NVIDIA H100 GPUs, and early users reported that effective context degraded at around 32,000 tokens in practice.24 The theoretical maximum and the operational reality diverge by a factor of roughly 300. Data Studios’ analysis of Meta AI confirms the gap at the user level. Despite underlying Llama 4 models offering multi-million-token theoretical capacities, the practical user experience operates through a rolling, relevance-weighted buffer, with earlier turns dropped or compressed as conversations grow.25

Grok’s architecture exhibits the same constraint but in a different form than competitors. Its token accounting encompasses not only visible chat messages but also system formatting, tool invocation scaffolding, reasoning tokens, and multimodal image encoding—with a single image consuming hundreds to nearly 2,000 tokens depending on dimensions.26 Every tool call, retrieved document and reasoning trace subtracts from the available context budget for actual dialogue or persistent agent state. The 2-million-token window Grok 4.1 Fast advertises is the theoretical ceiling and not the working capacity for any given real-world application.
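The token accounting described above amounts to simple subtraction, sketched here. Every figure in the `overhead` dictionary is hypothetical and chosen only for illustration, except the per-image cost, which uses the upper bound of roughly 2,000 tokens mentioned in the text. The 256,000-token window is the Grok 4 figure quoted earlier.

```python
def remaining_dialogue_budget(window: int, overhead: dict[str, int]) -> int:
    """Tokens left for dialogue after all non-dialogue overhead is counted."""
    used = sum(overhead.values())
    if used > window:
        raise ValueError(f"overhead ({used:,}) exceeds window ({window:,})")
    return window - used


# Hypothetical per-request overhead, in tokens.
overhead = {
    "system_prompt": 3_000,
    "tool_definitions": 12_000,
    "retrieved_documents": 60_000,
    "reasoning_reserve": 20_000,
    "images": 3 * 2_000,  # three images at the upper bound cited above
}

left = remaining_dialogue_budget(window=256_000, overhead=overhead)
print(f"{left:,} of 256,000 tokens remain for dialogue")
```

With these assumed numbers, about 40% of the advertised window is spent before the first user message is counted.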

OpenAI community practitioners who are building production systems have illuminated this tension between tokens and context, pointing to the impracticality of sending the entire conversation history on every request, and noting that aggressive summarization degrades model interaction quality.27 Every enterprise running long-horizon agent systems faces the same calculation—and nothing in the word context guides the decision about what to preserve and what to discard.

What Is Context?

Context, in its Latin root contextus, means the act of joining together, from con- (with) and texere (to weave). The etymology encodes a relational theory of meaning. Context is not a container. It is the fabric that holds meaning in place by connecting one element to another. Remove the relationships and what remains is noise with a token count. Plenty of technologists will argue that the word context has new meaning, and that the definition must be updated to reflect the use of the term in the context of AI.

In IBM’s definition of the context window as working memory, context is what “determines how long of a conversation” a model can carry out “without forgetting details from earlier in the exchange.”28 The word forgetting acknowledges that the context window is not neutral storage. The context window is, in fact, an active constraint on what relationships persist across time. When the window is exhausted, the model loses the threads responsible for connecting what it knew to what it is now being asked.

If we simplify the meaning of the word and orient the idea of context as applied to a tokenized window, context acts as a constraint on meaning. When a term appears in a sentence, context narrows what that term must mean in that setting—not by expanding the surrounding information, but by establishing which relational frame is active.

Let’s work through an example, with the word bank. In a sentence about rivers, bank means a physical embankment. The word does not require a larger window to be interpreted correctly; it requires that the relational field—conversation about rivers, not finance—is established and held. That establishment is what context structurally provides. A larger token window is one mechanism for holding more of the relational field in scope but it is not the relational structure itself.

To understand how a context window works, it is important to establish that language models operate on co-occurrence statistics. TPI explains that tokenization methods like Byte Pair Encoding progressively merge frequent character pairs to form tokens, enabling the model to learn distributional relationships between sequences.29 NVIDIA describes training as a process of showing the model token sequences and asking it to predict the next token, with parameters updated based on prediction accuracy.30
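The merge process behind Byte Pair Encoding can be sketched as follows. This is a toy, character-level version for illustration only: production tokenizers typically work on bytes, apply pre-tokenization rules, and learn tens of thousands of merges. The function names and the four-word corpus are hypothetical.

```python
from collections import Counter

Vocab = dict[tuple[str, ...], int]  # word split into symbols -> corpus frequency


def count_pairs(vocab: Vocab) -> Counter:
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs: Counter = Counter()
    for symbols, freq in vocab.items():
        for pair in zip(symbols, symbols[1:]):
            pairs[pair] += freq
    return pairs


def merge_pair(vocab: Vocab, pair: tuple[str, str]) -> Vocab:
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged: Vocab = {}
    for symbols, freq in vocab.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_merges(word_counts: dict[str, int], num_merges: int):
    """Greedily learn up to `num_merges` merges, most frequent pair first."""
    vocab: Vocab = {tuple(word): freq for word, freq in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        pairs = count_pairs(vocab)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        vocab = merge_pair(vocab, best)
    return merges, vocab


# Hypothetical toy corpus: word -> number of occurrences.
merges, vocab = learn_merges(
    {"low": 5, "lower": 2, "newest": 6, "widest": 3}, num_merges=2
)
print(merges)
print(list(vocab))
```

After two merges on this toy corpus, the frequent suffix "est" has become a single symbol. The procedure only ever looks at how often symbols sit next to each other, which is the distributional, co-occurrence-driven basis the paragraph above describes.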

Statistical adjacency is useful as it produces outputs that often appear contextually grounded. But statistical proximity is not relational structure. A model may correctly interpret bank as a riverbank without having represented the semantic relationship between that word and its surrounding concepts in any form that could be queried, governed, or reused across inference sessions.

Presenting Context

Chroma’s researchers found “what matters more is how that information is presented” rather than whether it is present in the window.31 And when we understand that the context problem is really about how information is presented, the logical context-driven solution may very well be neurosymbolic structures.

Knowledge graphs, ontologies, and structured vocabularies are forms of symbolic knowledge representation and have existed for decades, so this is not a new idea. It is the foundational premise of symbolic AI, what researchers in the field call Good Old-Fashioned AI, or GOFAI, in which hand-crafted rules, logic-based algorithms, and formal knowledge structures do the work of constraining inference while supporting logical reasoning.32 Neurosymbolic AI, the field positioned as the antidote to LLM unreliability, combines a symbolic backbone with neural pattern matching. Knowledge graphs and ontologies are the symbolic component. The LLM is the neural one.33

The economic benefits of neurosymbolic knowledge structures make this form of context especially compelling. Passing a well-formed knowledge graph subgraph into a context window delivers more inferential signal per token than passing equivalent raw text, because the relationships are already explicit and the model does not need to reconstruct them statistically. Research presented at the Workshop on Generative AI and Knowledge Graphs documented an 80% decrease in token usage when graph-based retrieval replaced conventional vector RAG methods in financial document processing, along with a 734-fold reduction in contradiction detection complexity.34
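To make “passing a subgraph” concrete, here is a minimal sketch of selecting the triples within a few hops of a seed entity and serializing them for a prompt. It is not the method used in the cited research. The triples, entity names, the `subgraph` and `serialize` helpers, and the arrow notation are all hypothetical.

```python
from collections import deque

Triple = tuple[str, str, str]  # (subject, predicate, object)


def subgraph(triples: list[Triple], seed: str, max_hops: int) -> list[Triple]:
    """Collect triples reachable from `seed` within `max_hops`,
    following edges in either direction (breadth-first)."""
    frontier = deque([(seed, 0)])
    seen_nodes = {seed}
    selected: list[Triple] = []
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue
        for triple in triples:
            subject, _, obj = triple
            if node not in (subject, obj):
                continue
            if triple not in selected:
                selected.append(triple)
            neighbor = obj if subject == node else subject
            if neighbor not in seen_nodes:
                seen_nodes.add(neighbor)
                frontier.append((neighbor, depth + 1))
    return selected


def serialize(triples: list[Triple]) -> str:
    """Render triples as one explicit relationship per line."""
    return "\n".join(f"({s}) -[{p}]-> ({o})" for s, p, o in triples)


# Hypothetical knowledge graph.
graph = [
    ("Acme Corp", "issued", "Bond A"),
    ("Bond A", "matures_in", "2030"),
    ("Acme Corp", "audited_by", "Firm X"),
    ("Firm X", "headquartered_in", "Zurich"),
    ("Beta LLC", "issued", "Bond B"),
]

one_hop = subgraph(graph, seed="Acme Corp", max_hops=1)
two_hop = subgraph(graph, seed="Acme Corp", max_hops=2)
print(serialize(two_hop))
```

Each line of the serialized output states one relationship outright, and unrelated facts (here, everything about Beta LLC) never enter the window. The design choice is that relevance is decided by graph structure before any tokens are spent.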

A 2025 study on ontology-guided knowledge graph construction found that ontologies derived from relational databases reduced overall LLM usage costs substantially, because the ontology learning process is performed once and does not require repeated inference to maintain.35

The SubgraphRAG framework demonstrated that smaller models running over structured subgraphs matched the accuracy of much larger models running over raw text—the same reasoning outcome at a fraction of the token cost.36 Structured knowledge is not a supplement to context engineering. It is what context engineering is attempting to approximate when the underlying knowledge architecture has not been built.

With a crowded field of vendors and practitioners vying for attention while feverishly racing towards “winning” in the AI space, context has become a competitive landscape. Vendors, VC firms, practitioners and consultancies are all pitching their versions of context, and the range of solutions is noisy and sprawling. The one thing everyone can agree upon is tokens and context windows. The new domain role, context engineering, is now entering the fray, just in time, to save the day.

Context Engineering as Triage

The emergence of context engineering as a named discipline is a candid acknowledgment that the context window requires active management. Anthropic’s engineering team defines it as “the art and science of curating what will go into the limited context window from that constantly evolving universe of possible information,” describing it as the natural evolution of prompt engineering as applications move from one-shot tasks to agents operating across multiple turns of inference.37 The guiding principle is finding “the smallest possible set of high-signal tokens that maximize the likelihood of some desired outcome”—a formulation that identifies less context as the engineering goal, inverting the marketing premise that larger windows produce better results.

The underlying conditions of the context window have led to techniques for managing it as it accumulates noise and coherence degrades. Compaction summarizes conversation history and reinitializes the context using the compressed version, preserving conversational flow at the cost of some fidelity. Structured note-taking persists critical agent state outside the window and retrieves it on demand, rather than holding everything in context continuously.

Sub-agent architectures isolate focused tasks in clean context windows and return only distilled summaries to the coordinating agent—with Anthropic’s multi-agent system returning 1,000 to 2,000 tokens of summary from sub-agents that may have consumed tens of thousands of tokens in their local contexts.38
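Compaction, the first of these techniques, can be sketched as follows. This is a simplified illustration and not Anthropic’s implementation. The `compact` function, the message format, and the placeholder summarizer are hypothetical; in practice the summarizer would be a model call. Token counts are estimated with the rough four-characters-per-token figure cited earlier.

```python
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...}


def estimate_tokens(text: str) -> int:
    """Rough estimate, assuming about four characters per token."""
    return max(1, len(text) // 4)


def compact(
    history: list[Message],
    budget: int,
    keep_recent: int,
    summarize: Callable[[list[Message]], str],
) -> list[Message]:
    """If `history` exceeds `budget` tokens, replace all but the last
    `keep_recent` messages with a single summary message."""
    total = sum(estimate_tokens(m["content"]) for m in history)
    split = len(history) - keep_recent
    if total <= budget or split <= 0:
        return history
    older, recent = history[:split], history[split:]
    summary = {
        "role": "system",
        "content": f"Summary of earlier conversation: {summarize(older)}",
    }
    return [summary] + recent


def placeholder_summarizer(messages: list[Message]) -> str:
    """Stand-in for a model-generated summary."""
    return f"{len(messages)} earlier messages omitted"


# Six hypothetical turns of about 100 estimated tokens each.
history = [{"role": "user", "content": "x" * 400} for _ in range(6)]
compacted = compact(history, budget=300, keep_recent=2, summarize=placeholder_summarizer)
print(len(history), "->", len(compacted), "messages")
```

The most recent turns survive verbatim while older turns collapse into one message, which is where both the token savings and the loss of fidelity come from.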

Grok’s developer documentation describes the same set of pressures from the operational side. Architects managing Grok deployments for real-time chat must decide how many prior messages to replay, when to replace verbatim history with higher-level summaries, and how to allocate token budget amongst user turns, tool outputs, and environmental state—with each addition of retrieved documents or tool results directly reducing the space available for dialogue.39 This is not a Grok-specific limitation. Every model in the table above faces it. The 2-million-token window of Grok 4.1 Fast delays the accounting problem but it does not eliminate it.

Each of these context engineering techniques has direct cost implications. Compaction reduces token count on subsequent calls and therefore the invoice. Structured note-taking replaces context-window storage with cheaper external storage. Sub-agent architectures trade orchestration overhead for context efficiency. OpenAI’s tool search feature for GPT-5.4, which allows the model to fetch tool definitions on demand rather than loading all definitions upfront, reduced total token usage by 47% in benchmark testing.40

NVIDIA frames the production economics of token management in terms of three metrics (time to first token, inter-token latency, and throughput), each representing a tradeoff that developers must balance against the specific demands of their application.41 Token efficiency is now a design discipline. Context engineering has emerged because marketed capacity does not deliver coherence automatically, and the gap between the marketing promises and what the tokens actually deliver carries a price.

The Economics of Context

In reality, the market rewarded the wrong definition at the wrong moment, or the right moment, depending on your outlook. The timing matters all the more because, given the state of the US economy, an AI collapse would amount to an economic collapse. When commercial AI companies began competing on context window size, they organized an entire industry’s vocabulary around a technical parameter, tokens per window, that measures capacity rather than coherence. Enterprise purchasing criteria now hinge on context window size rather than on how knowledge is structured, how relationships are represented, or how meaning persists across inference sessions.

Context window size and knowledge quality are different questions, and optimizing for one or the other produces different systems. The difference between these priorities does not appear in token counts or line-item costs, but it accumulates in the quality of reasoning the system produces over time.

The pricing table above makes this concrete. GPT-5.4 Pro at $180 per million output tokens costs 72 times more than Gemini 2.5 Flash at $2.50 per million output tokens—and 360 times more than Grok 4.1 Fast at $0.50 per million output tokens across a 2-million-token window. Llama 4 Scout’s 10-million-token context window is available open-weight, with API providers charging as little as $0.08 per million input tokens and $0.30 per million output tokens.

The market has produced a wide range of capacity options at a wide range of prices. However, it has not produced a standard for what context means in these transactions—a standard that would let buyers compare window size and semantic quality.

For now, many organizations and practitioners are dealing with what has been coined context rot. Context rot is the technical term for what happens when more tokens produce less understanding.42 Chroma’s researchers conclude that “even the most capable models are sensitive to how information is presented, making effective context engineering essential for reliable performance.”43 IBM’s working memory analogy is the most honest available—memory without structure is forgetfulness waiting to happen. The size of the memory does not determine the quality of the recall. The organization of what is held there does.

In a normal marketplace, charging for a unit whose quality the seller controls, defines, and declines to warrant would register as a structural red flag for economists. The applicable framework is George Akerlof’s 1970 theory of the market for lemons: when sellers know more about quality than buyers, buyers discount the entire market, and sellers with real quality cannot price it.44 AI context pricing embeds this structure at the unit level. Foundation AI companies define the token, control the mechanisms that determine whether those tokens cohere, and bear no disclosure obligation regarding coherence. Buyers cannot inspect semantic quality before purchase. The invoice reports how many tokens were consumed yet fails to report whether the context within those tokens was navigable, epistemically sound, or degrading as the window accumulated context.

The lemons analogy does not require that AI companies are knowingly selling defective goods. A lemons market arises whenever no mechanism exists by which buyers can detect or signal the difference between a well-structured context and an incoherent one at the same price. This information asymmetry aptly describes the current conditions. Akerlof’s insight is that this structural opacity is sufficient to degrade a market, regardless of intent. In the United States, no statute currently governs AI output quality or context coherence disclosures.

The FTC Act’s prohibition on unfair or deceptive practices, alongside state-level consumer protection statutes, provides theoretical jurisdiction over misleading capability claims, but vendor contracts universally disclaim any warranty of fitness for a particular purpose, and no case law has tested context quality as a cognizable defect.45 The EU AI Act’s transparency requirements for high-risk systems offer some protections, but do not address context coherence specifically.46 The market for tokens is, for now, a market without a lemon law.

The sharper technical term for the current state of context and token economics is credence good—a category formalized by Darby and Karni to describe goods whose quality cannot be verified even after consumption.47 One can evaluate output quality after the fact, but cannot verify whether that output reflects coherent processing of the full context window or stochastic, plausible-sounding inference from a fraction of context input. The seller’s informational advantage is a permanent fixture, with seemingly moving targets and ever-evolving user requirements. Under FTC doctrine, a material claim likely to mislead a reasonable consumer constitutes a deceptive trade practice.48

The marketing of the “context window” implies coherent, accessible, usable working memory, and technology vendors and AI companies alike market it that way. The empirical record, including the context rot findings discussed above, shows degraded performance well within the marketed capacity range. The gap between the marketed claim and the delivered product is what the FTC standard was designed to address.

The saving grace and polite framing for the mismatch between reality and marketing pitches is “emerging market with immature disclosure norms.” But if we are being honest with ourselves, AI company products are a credence good, sold with performance claims that the seller controls and the buyer cannot audit, in a market with no standardization of the unit being priced.

What About the Vendors?

Vendors and enterprises are not operating from the same incentive structure, but they have arrived at the same frenzy around context. For vendors, context window size is a product differentiator and a revenue mechanism—larger windows command premium pricing, and the tiered surcharge structures documented in the table above ensure that the most demanding workloads generate the highest margins. For enterprises, context is the cost of keeping AI systems functional. Token spend is where the AI budget tends to go when knowledge infrastructure has not been built.

The context associated with tokens most commonly exists as raw documents without an organizational backbone, conversation histories pasted into windows instead of managed, and sometimes access to database tables without a lick of semantics to be found. In all of these instances, organizations are expecting the model to reconstruct relationships that were never formally represented.

AI companies and vendors both call this context but neither side has agreed on what context should mean, or what success looks like when it is managed correctly. Gartner’s March 2026 survey of 353 data and analytics leaders found that fewer than one in five are even concerned that uncertain costs will limit AI value—and only 44% of organizations have adopted financial guardrails or AI FinOps practices to manage token spend.49 And when the invoice arrives, there is no framework for interpreting it.

The advisory signal has been consistent, echoing the need for context. Gartner identified three pillars for deriving value from AI at its March 2026 Data and Analytics Summit:

A clear AI ambition tied to return on intelligence.

Trusted data systems and governance as the foundation for return on integrity.

People equipped with the skills to execute.50

At the same summit, Gartner’s Chief of Research named composite and neurosymbolic AI—the combination of data-driven and knowledge-driven techniques—as one of three scenarios shaping the future of AI and data.51 Gartner’s top data and analytics predictions for 2026 named context explicitly as one of AI’s defining impact areas, and warned that ungoverned decisions using large language models will cause financial or reputational loss for enterprises in the near term.52

Gartner also projected that by 2030, 50% of AI agent deployment failures will trace back to insufficient governance and interoperability, not to model capabilities.53 The institutional guidance points in one direction while the market investment points in another. In the gaps between context marketing and token economics, every organization is engineering context in its own way, measuring success against its own criteria, and paying for tokens with little concern for what they are being billed for. While it is only logical that organizations go the way of neurosymbolic AI and semantic knowledge infrastructures, whether they will is anyone’s guess given the slippery nature of context.

Conclusion

When a word becomes a billing unit, the concept associated with the word can quickly lose meaning. Context in contemporary AI marketing now gestures at capacity (how much the model can see), process (the act of providing information), and quality (the claim that relationships are understood). These are three distinct things captured under the context umbrella. Treating them as one produces systems that are large, fast, and frequently confused about what they know and how to communicate meaning.

Structurally, context requires weaving: the explicit representation of how one piece of information relates to another, persistent enough to survive compression, navigable enough to be queried, and exact enough to constrain inference. That context work does not appear on any token invoice. Ultimately, a 10-million-token window with poorly organized context is a very expensive way to introduce noise.
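To make the idea of explicit, queryable relationships concrete, here is a minimal sketch in Python. It is an illustration only, not a recommended architecture: the `TripleStore` class, its method names, and the invoice data are all hypothetical, and it assumes relationships are stored as simple subject-predicate-object statements. Persistence and real inference are deliberately left out.

```python
from collections import defaultdict
from typing import NamedTuple


class Triple(NamedTuple):
    """One explicit statement: how a subject relates to an object."""
    subject: str
    predicate: str
    obj: str


class TripleStore:
    """Hypothetical in-memory store of explicitly asserted relationships."""

    def __init__(self) -> None:
        self._triples = set()
        self._by_subject = defaultdict(set)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        # Record the statement and index it by subject for lookup.
        triple = Triple(subject, predicate, obj)
        self._triples.add(triple)
        self._by_subject[subject].add(triple)

    def objects(self, subject: str, predicate: str) -> set:
        # Navigable: find what a subject relates to via a named predicate.
        return {
            t.obj
            for t in self._by_subject.get(subject, set())
            if t.predicate == predicate
        }

    def holds(self, subject: str, predicate: str, obj: str) -> bool:
        # Exact: a claim is supported only if it was explicitly asserted.
        return Triple(subject, predicate, obj) in self._triples


# Hypothetical example data, invented for illustration.
store = TripleStore()
store.add("invoice-1042", "billed_to", "acme-corp")
store.add("invoice-1042", "covers_service", "model-api-usage")
store.add("acme-corp", "has_budget_owner", "finance-team")

billed = store.objects("invoice-1042", "billed_to")
supported = store.holds("invoice-1042", "billed_to", "acme-corp")
unsupported = store.holds("invoice-1042", "billed_to", "finance-team")
```

The point of the sketch is the contrast with a flat block of text: each relationship is named, can be looked up directly, and a claim that was never asserted (the last line) is simply not supported, rather than being left for a model to guess.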

Footnotes

Nathaniel Lovin, Scott Wallsten, and Sarah Oh Lam, "From Tokens to Context Windows: Simplifying AI Jargon," Technology Policy Institute, March 6, 2024, https://techpolicyinstitute.org/publications/artificial-intelligence/from-tokens-to-context-windows-simplifying-ai-jargon/.

“What Is a Context Window?” IBM Think, updated 2025, https://www.ibm.com/think/topics/context-window.

Dave Salvator, “Explaining Tokens — the Language and Currency of AI,” NVIDIA Blog, March 17, 2025, https://blogs.nvidia.com/blog/ai-tokens-explained/.

“Gemini 2.5 Flash,” Google AI for Developers, https://ai.google.dev/gemini-api/docs/models/gemini-2.5-flash.

"GPT-5.4 Deep Dive: Pricing, Context Limits, and Tool Search Explained," OpenAI Developer Community, March 5, 2026; confirmed via OpenRouter, openrouter.ai/openai/gpt-5.4-pro.

"Gemini 2.5 Flash," Google AI for Developers, ai.google.dev/gemini-api/docs/pricing; confirmed via OpenRouter, openrouter.ai/google/gemini-2.5-flash.

"Grok API Pricing: Models, Costs & Comparisons," mem0.ai, March 3, 2026, mem0.ai/blog/xai-grok-api-pricing; confirmed via OpenRouter, openrouter.ai/x-ai/grok-4.1-fast.

Meta AI, "The Llama 4 Herd: The Beginning of a New Era of Natively Multimodal AI Innovation," April 5, 2025, ai.meta.com/blog/llama-4-multimodal-intelligence/; API pricing via OpenRouter, openrouter.ai/meta-llama/llama-4-maverick.

"GPT-5.4 Model," OpenAI API Documentation, developers.openai.com/api/docs/models/gpt-5.4.

"Azure OpenAI in Microsoft Foundry Models Quotas and Limits," Microsoft Learn, learn.microsoft.com/en-us/azure/foundry/openai/quotas-limits.

"Introducing Claude Opus 4.6," Anthropic, February 5, 2026, anthropic.com/news/claude-opus-4-6; Anthropic Pricing Documentation, platform.claude.com/docs/en/about-claude/pricing.

Meta AI, "The Llama 4 Herd," April 5, 2025; API pricing via OpenRouter, openrouter.ai/meta-llama/llama-4-scout.

"Grok API Pricing: Models, Costs & Comparisons," mem0.ai, March 3, 2026; xAI Models and Pricing, docs.x.ai/developers/models.

"xAI Grok 4," Oracle Cloud Infrastructure Generative AI Documentation, 2025, docs.oracle.com/en-us/iaas/Content/generative-ai/xai-grok-4.htm. Context window on Oracle's deployment is listed at 128,000 tokens; xAI's own API lists Grok 4 at 256,000 tokens.

“Working with Context,” Cursor Documentation, accessed March 11, 2026, https://docs.cursor.com/guides/working-with-context.

Prithvi Rajasekaran et al., “Effective Context Engineering for AI Agents,” Anthropic Engineering, September 29, 2025.

Lovin, Wallsten, and Oh Lam, “From Tokens to Context Windows.”

Razvan I. Savin, “Understanding Context Tokens and Generated Tokens Ratio,” OpenAI Developer Community, April 9, 2024.

“Grok Context Window and Token Limits in Real-Time Interactions,” Data Studios, February 28, 2025.

Jeff-Lee, “Show HN: Brf.it – Extracting Code Interfaces for LLM Context,” Hacker News, March 2026.

Kelly Hong, Anton Troynikov, and Jeff Huber, “Context Rot: How Increasing Input Tokens Impacts LLM Performance,” Chroma Research, July 14, 2025.

Ibid.

Ibid.

Ibid.

“Meta AI Context Window: Token Limits, Context Retention, Conversation Length, and Memory Handling,” Data Studios, February 10, 2025.

Data Studios, “Grok Context Window and Token Limits in Real-Time Interactions.”

Reza6, “Best Practices for Cost-Efficient, High-Quality Context Management in Long AI Chats,” OpenAI Developer Community, February 11, 2026.

“What Is a Context Window?” IBM Think, updated 2025.

Lovin, Wallsten, and Oh Lam, “From Tokens to Context Windows.”

Salvator, “Explaining Tokens — the Language and Currency of AI.”

Hong, Troynikov, and Huber, “Context Rot.”

Ajith Vallath Prabhakar, "Neuro-Symbolic AI: Foundations, Benefits, and Real-World Applications," July 27, 2025, ajithp.com/2025/07/27/neuro-symbolic-ai-multimodal-reasoning/. "Symbolic approaches dominated early AI in the mid-20th century, and their architecture included hand-crafted rules, logic-based algorithms, and knowledge engineering (the era of 'good old-fashioned AI' or GOFAI)."

Michael Delong et al., "Neurosymbolic AI for Reasoning over Knowledge Graphs," arXiv:2302.07200, February 2023. Survey of neurosymbolic approaches combining symbolic reasoning with deep learning using knowledge graphs and OWL ontologies as the formal symbolic layer.

Mariam Barry et al., "GraphRAG: Leveraging Graph-Based Efficiency to Minimize Hallucinations in LLM-Driven RAG for Finance Data," Proceedings of the Workshop on Generative AI and Knowledge Graphs (GenAIK), Abu Dhabi, January 2025, aclanthology.org/2025.genaik-1.6/.

Tiago Cruz et al., "Ontology Learning and Knowledge Graph Construction: A Comparison of Approaches and Their Impact on RAG Performance," arXiv:2511.05991, November 2025, arxiv.org/html/2511.05991v1.

Mufei Li, Siqi Miao, and Pan Li, "Simple Is Effective: The Roles of Graphs and Large Language Models in Knowledge-Graph-Based Retrieval-Augmented Generation," ICLR 2025, arXiv:2410.20724, arxiv.org/abs/2410.20724.

Rajasekaran et al., “Effective Context Engineering for AI Agents.”

Ibid.

Data Studios, “Grok Context Window and Token Limits in Real-Time Interactions.”

“GPT-5.4 Deep Dive,” OpenAI Developer Community, March 5, 2026.

Salvator, “Explaining Tokens — the Language and Currency of AI.”

Hong, Troynikov, and Huber, “Context Rot.”

Ibid.

George A. Akerlof, “The Market for ‘Lemons’: Quality Uncertainty and the Market Mechanism,” Quarterly Journal of Economics 84, no. 3 (August 1970): 488–500.

Federal Trade Commission, "FTC Issues Guidance on AI Claims," 2023, ftc.gov. The FTC Act Section 5 prohibits unfair or deceptive acts or practices in commerce; enforcement against AI capability claims remains an active but untested priority area.

European Parliament, Regulation (EU) 2024/1689 of the European Parliament and of the Council (EU AI Act), June 13, 2024, eur-lex.europa.eu. Transparency and documentation obligations apply to high-risk AI systems under Articles 13 and 16 but do not specify context quality or semantic coherence as disclosure categories.

Michael R. Darby and Edi Karni, “Free Competition and the Optimal Amount of Fraud,” Journal of Law and Economics 16, no. 1 (April 1973): 67–88.

Federal Trade Commission, “Policy Statement on Deception,” October 14, 1983, appended to In re Cliffdale Associates, Inc., 103 F.T.C. 110 (1984).

Adam Ronthal and Georgia O’Callaghan, “Gartner Identifies Three Pillars for Deriving Value from AI,” Gartner Newsroom, March 9, 2026, gartner.com/en/newsroom/press-releases/2026-03-09-gartner-identifies-three-pillars-for-deriving-value-from-ai; reported via HPCwire, hpcwire.com/aiwire/2026/03/09/gartner-identifies-3-pillars-for-deriving-value-from-ai/.

Ibid. The three pillars as identified: AI ambition (return on intelligence), strong AI foundations and trusted data governance (return on integrity), and people empowerment (return on individuals).

Erick Brethenoux, “Future of AI Trends,” Gartner Data and Analytics Summit Day 2, Orlando, March 10, 2026; reported in “Gartner Data & Analytics Summit 2026 Day 2 Highlights,” gartner.com/en/newsroom/press-releases/2026-03-10-gartner-data-and-analytics-summit-2026-orlando-day-2-highlights. Composite and neurosymbolic AI (“AI composed of multiple data-driven and knowledge-driven techniques”) was identified as one of three future AI trends.

Rita Sallam, “Gartner Announces Top Predictions for Data and Analytics in 2026,” Gartner Newsroom, March 11, 2026, gartner.com/en/newsroom/press-releases/2026-03-11-gartner-announces-top-predictions-for-data-and-analytics-in-2026. Context is named explicitly as one of AI’s impact areas; the release states that “ungoverned decisions using LLMs will cause financial or reputational loss for enterprises.”

Ibid. “By 2030, 50% of AI agent deployment failures will be due to insufficient AI governance platform runtime enforcement for capabilities and multisystem interoperability.”

About me

I’m an information architect, semantic strategist, and lifelong student of systems, meaning, and human understanding. For over 25 years, I’ve worked at the intersection of knowledge frameworks and digital infrastructure, helping both large organizations and cultural institutions build information systems that support clarity, interoperability, and long-term value.

I’ve designed semantic information and knowledge architectures across a wide range of industries and institutions, from enterprise tech to public service to the arts. I’ve held roles at Overstock.com, Pluralsight, GDIT, Amazon, System1, Battelle, the Oregon Health Authority, the Department of Justice, and Adobe.

I currently consult for large enterprises and startups that want to realize AI-ready knowledge infrastructures. Sticking around for the wins is my favorite part. I also teach how to build semantic knowledge infrastructures through The Knowledge Graph Academy.

Throughout the years, I’ve worked at GLAM organizations (Galleries, Libraries, Archives, and Museums), including the Smithsonian Institution, The Shoah Foundation for Visual History, Twinka Thiebaud and the Art of the Pose, Nritya Mandala Mahavihara, the Shogren Museum, and the Oregon College of Art and Craft. The GLAM work teaches us why preserving knowledge is critical for AI and society.

And through it all, I am a librarian.

Hello Jessica,

Brilliant, extraordinary assimilation of knowledge! But I approve more of the linguistic analysis, and more particularly the very inspired grammatical analysis, than of the economic analysis.

I had already seen the name of Akerlof, but it seems logical to me that sellers (of lemons) know more (about lemons) than consumers, even if consumers have made a lot of progress. It reminds me of a microeconomics class where a rather well-known professor was presenting a simplistic theory of markets, in which sellers and buyers set the right price based on perfectly distributed information (perhaps inspired by Walras). I asked him what influence advertising had on consumer information. It was late and he promised to respond the next day, but he never did.

Akerlof's theory is therefore the opposite since it highlights information asymmetries, but his example seems a bit specious to me, although there may have been such cases in the United States at the time he described this mechanism. It’s nevertheless very smart of you to have invoked it because there is a great deal of opacity about the way models work, but even for suppliers :). That is why they cannot commit to consistency.

Moreover, the increase in the size of context windows initially responded to a pressing demand from users and offered them a clear pricing advantage (price per token, for example between GPT-5.2 and GPT-5.4), even if it is an incentive to consume.

On the other hand, suppliers may not have an interest in bearing "context rot" for too long because if one does not know their economic model, it seems that they themselves bear most of the cost. And the market might move towards more economical and efficient solutions in a "context" where everyone tinkers, as you mentioned just before the conclusion.

"Context rot" - thanks for the new word! Really helpful "big picture" perspective.

A simple takeaway - context will always get added, somewhere, by the user, by the model, or somewhere in between. How well it's managed (a rarity) determines the cost and reliability. Does that make sense?

One more random takeaway - "reports" in the BI world seem equivalent to "prompts" in the AI world. A good BI solution reduces report logic by pushing consensus business logic lower in the architecture. It's a metaphor :)
